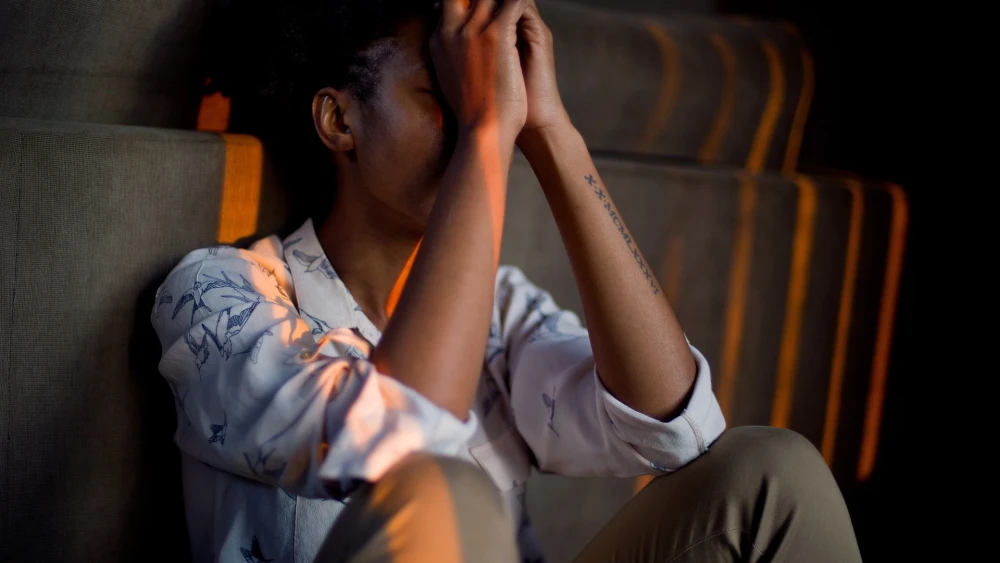

With a growing number of young people living under the strain of war and insecurity, Israeli mental health professionals gathered last week at a U.N. event to chart how professional counseling services can be reconciled with artificial intelligence, a technology that could help drive the effort or harm it.

That tension was at the center of a side event organized by Israel’s mission to the United Nations during the annual U.N. Economic and Social Council Youth Forum, held April 14–16 at U.N. headquarters in New York.

Titled “The Role of Artificial Intelligence in Supporting Youth Experiencing Trauma During Times of War,” the session brought together Israeli researchers, clinicians and youth advocates to examine how emerging technologies can expand access to care while safeguarding against potential risk.

“The psychological toll of these realities is immense, and yet so is the ingenuity and resilience of the communities working to respond,” said Avital Rosenberg, counselor for human rights at Israel’s U.N. mission. “Artificial intelligence is emerging as one of the powerful tools in that response, enabling early intervention, scaling access to care, reducing stigma and providing support when and where traditional services cannot reach. But innovation must be responsible.”

The Youth Forum, a flagship U.N. platform for engaging young people in global policy discussions, convenes government officials, civil society groups and youth leaders to advance solutions tied to the 2030 Sustainable Development Goals.

“For our generation in Israel, trauma isn’t a theoretical concept. It’s a lived reality of navigating war, loss and the constant weight of instability,” said Maya Shany, Israeli youth delegate. “Nearly 50% of those who need mental health support cannot access it. This crisis has pushed us to explore artificial intelligence as a useful tool to bridge this divide. However, our experience has taught us that innovation without deep human-centered caution can be as dangerous as the trauma itself.”

Shany and other speakers at the event highlighted ways in which AI can complement, rather than replace, trained psychological care professionals in addressing the trauma of war.

“We must be clear that therapy is fundamentally about human relationships,” she said. “AI should serve as a bridge to human care, helping with logistics, journaling and early detection, while ensuring that safety remains in the hands of trained professionals.”

Talia Meital Schwartz-Tayri, who founded and heads Ben-Gurion University’s Artificial Intelligence for Social Welfare Research Lab, described an application she developed for “psychological first aid.”

“From the beginning, my goal was to explore how we can combine AI with psychosocial interventions and trauma interventions to help people cope more effectively with extremely difficult situations, not only in Israel, and not only to recover, but in some cases, even grow stronger after trauma,” she said.

The application allows users to describe their situation and, within seconds, generates tailored, protocol-based recommendations for first responders.

“The first hours after trauma matter the most, and it is well known that delivering appropriate psychological first aid within the first 84 hours can reduce acute shock and anxiety and help prevent long-term post-traumatic stress,” Schwartz-Tayri said.

She noted that the tool is not a treatment in and of itself but is designed to assist caregivers, not to replace them.

“In trauma intervention, AI should never interact directly with the person in distress,” she said. “Instead, AI should strengthen and foster the clinical capacity of the helper.”

‘Not a replacement for the human soul’

Vered Amitzi, head of research and development at the Israel Trauma Coalition for Response and Preparedness, warned that, with AI’s rapid rise, it’s critical for those in the trauma care field to set standards for how mental health apps are built. She estimated that there are thousands of such apps on the market.

“There is currently no reliable mechanism locally or globally to verify whether any given tool is clinically sound, culturally appropriate or simply safe,” Amitzi said. “We have seen extreme cases where people used AI as a substitute for professional mental health care with devastating outcomes, including AI systems that provided responses leading to suicide.”

To address those gaps, Amitzi said her organization has partnered with others to develop the Israel Resilience Network, a joint digital platform where partners can contribute educational materials, coping tools and structured programs in a shared, accessible place.

“During acute escalation periods, such as that we just had, we see significant spikes in platform use, precisely when formal services are overwhelmed or inaccessible,” Amitzi said. “This is exactly the gap digital tools must be designed to fill.”

The collaboration has also produced a children’s resilience app developed with Bedouin communities in Israel’s Negev Desert, tailored to local cultural needs and available in Hebrew, Arabic and English.

“This embodies a principle that we hold firmly: innovation is only as good as its relevance to the people that it serves,” she said.

Amitzi proposed a coordinated framework involving government regulation, clinical expertise, technological capacity and academic oversight.

“We can move from a landscape of unverified tools to one of responsible, accountable innovations, and accountability requires measurement, not just tracking downloaders or users’ satisfaction, but ongoing evaluation against professional standards,” she said.

Yifat Reuveni, director of research and evaluation at NATAL, an Israeli nonprofit that treats victims of war and terrorism, agreed that the rapid integration of AI into mental health services should be closely monitored.

“While AI offers massive potential to scale access, it currently lacks genuine human empathy and physiological co-regulation,” she said. “It is a powerful co-pilot, but still not a replacement for the human soul.”